Many of our participants expressed concerns about automated or AI features that would make decisions for them and wanted greater transparency over algorithms on social platforms.

Show decisions that automated systems have made

Where possible, let people see why automated systems have made the decisions they have. For example, if content is recommended, provide a way for people to see why that content was recommended. Similarly, if an automated system has blocked messages from people (as advised in the recommendation Give people agency over who can message them without forcing matching), people should be able to see that this has happened.

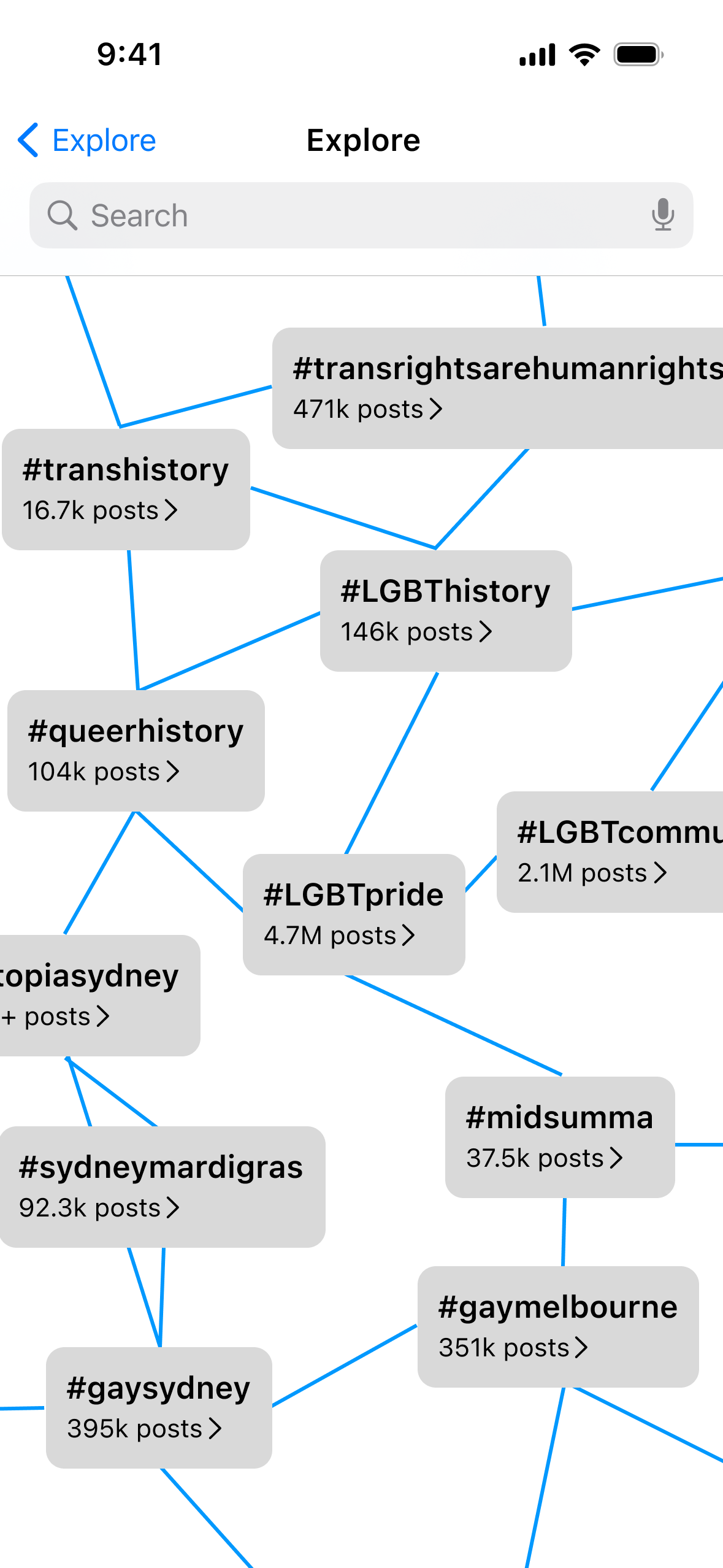

Although this was not the intention of the designs from the concept Suggested Topics, participants suggested that the topic graph (see right) could be a good way for them to explore the relations between the topics that social platforms had identified as being of interest to them.

Offer options for adjustments or manual review

Providing transparency over what decisions automated and AI systems have made is great, but people also need a way to fix things if they think a mistake has been made. Offering options for people to adjust settings might help them fix any issues, although a manual review option should also be provided for cases where the issues are more complex.