One tension that surfaced from evaluating many of the safety-related concepts was that many participants thought the concepts would greatly improve their experiences, while others had concerns about the accuracy of automated systems or did not want such features personally. To address this tension, we suggest giving people agency over how automated safety features are enforced when others interact with them.

Give people options for how to deal with NSFW content

Automated safety features that block unsolicited NSFW media and messages could greatly improve people's experiences. However, this does not mean that all conversations ought to be sanitised. Some people may want to enforce restrictions on what can be sent to them, while others may be less concerned. To balance this, we recommend nudging people by default not to send messages that may be inappropriate or confronting, while allowing recipients to either enforce or disable the blocking of messages from anyone who contacts them, according to their preferences. This might strike a compromise between safety and imperfect algorithmic detection, without forcing spaces to be sanitised for those who do not want that.

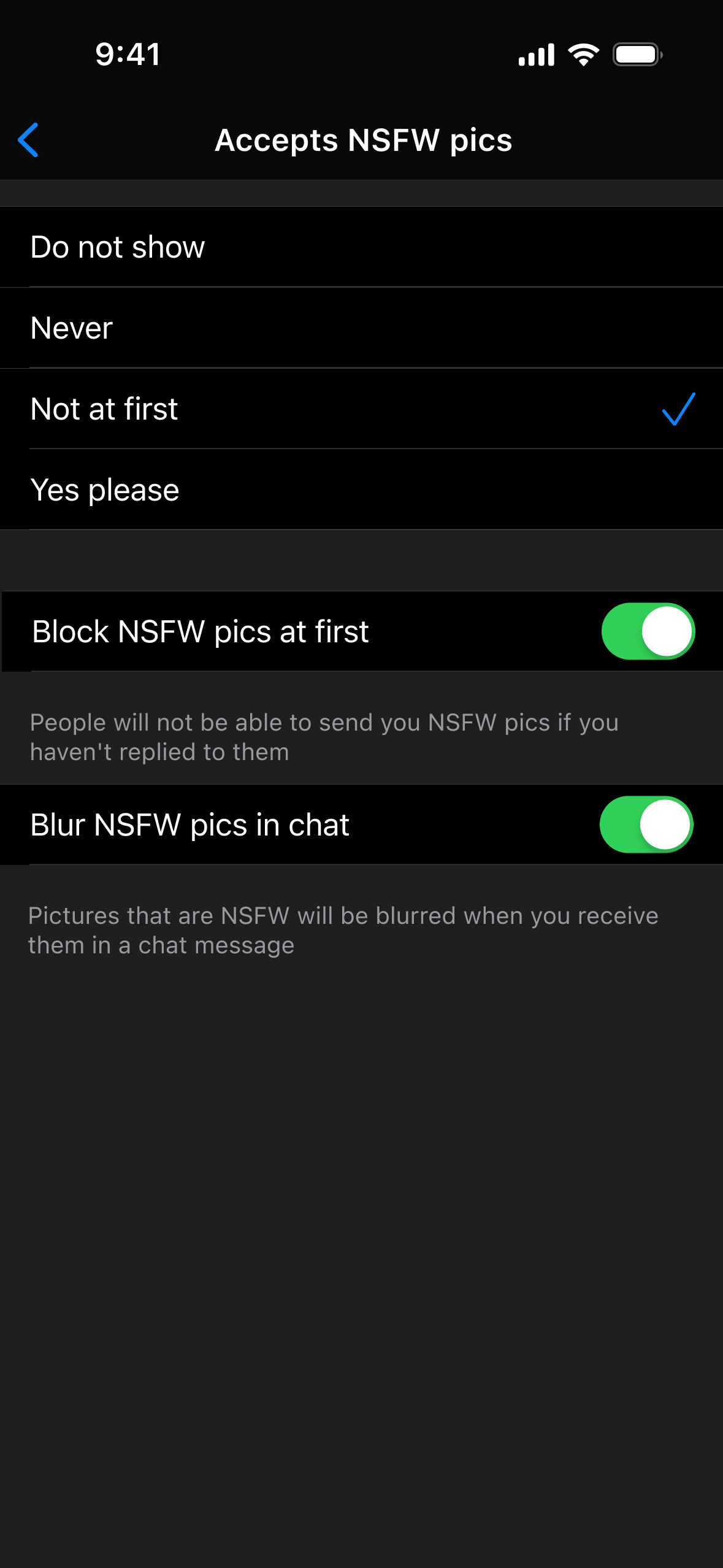

The mockup on the right shows an example of how people can be given options. Beyond letting people indicate whether they want to receive NSFW content (as Grindr currently allows), it also lets them say that NSFW pictures should be blocked or blurred. People who do not mind receiving such images can disable these options, while those who want more control have a way to avoid unwanted or unexpected viewing of NSFW content.
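
One way to read these options is as a per-recipient setting applied to whatever an automated detector flags, alongside the default sender nudge recommended above. The following is a minimal sketch under that assumption, not a description of Grindr or any existing app: the names `NsfwHandling`, `SafetyPreferences`, `handle_incoming_picture` and `should_nudge_sender` are hypothetical, and `flagged_nsfw` stands in for the output of an unspecified (and possibly inaccurate) classifier.

```python
from dataclasses import dataclass
from enum import Enum


class NsfwHandling(Enum):
    """Hypothetical recipient-side choices for flagged pictures."""
    ALLOW = "allow"  # show flagged pictures as normal
    BLUR = "blur"    # show flagged pictures blurred until the recipient opts in
    BLOCK = "block"  # do not show flagged pictures at all


@dataclass
class SafetyPreferences:
    """Hypothetical settings a person chooses for anyone who contacts them."""
    nsfw_pictures: NsfwHandling


def handle_incoming_picture(prefs: SafetyPreferences, flagged_nsfw: bool) -> str:
    """Decide how to present a picture to the recipient.

    `flagged_nsfw` is assumed to come from an automated detector that can be
    wrong, which is why the recipient, not the system, picks the response.
    """
    if not flagged_nsfw:
        return "show"
    if prefs.nsfw_pictures is NsfwHandling.BLOCK:
        return "hide"
    if prefs.nsfw_pictures is NsfwHandling.BLUR:
        return "show_blurred"
    return "show"


def should_nudge_sender(flagged_nsfw: bool, nudges_enabled: bool = True) -> bool:
    """Nudge the sender before sending flagged content; on by default."""
    return flagged_nsfw and nudges_enabled
```

Because blurring only hides a picture until the recipient chooses to view it, a false positive from the detector costs an extra tap rather than a lost message, which is one reason to offer it alongside outright blocking.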

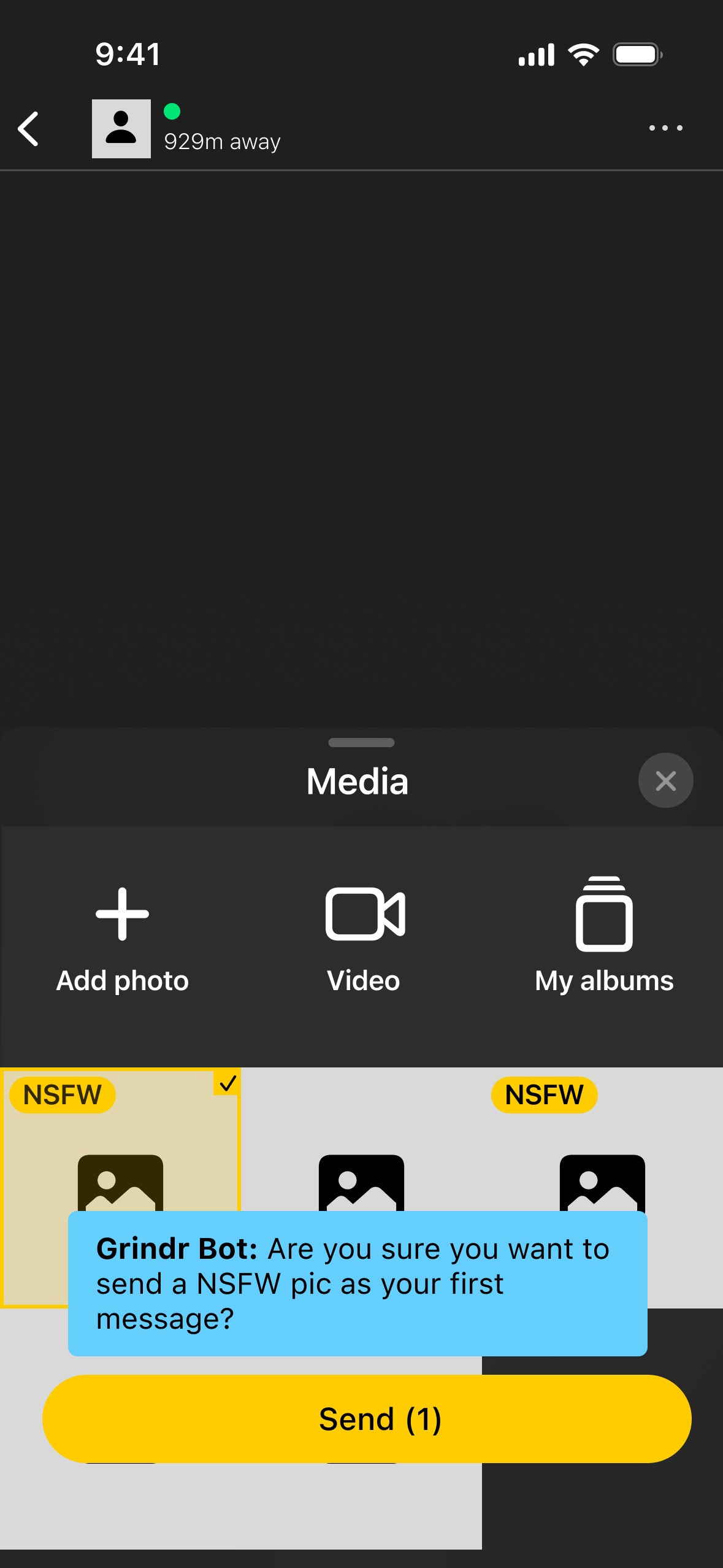

The mockup on the far right shows an example of how someone might be nudged when they try to do something that may be inappropriate.